There's a question I get asked more than any other when I describe MUSE: "So it's AI-generated storytelling?"

No. And the distinction matters more than anything else in the architecture.

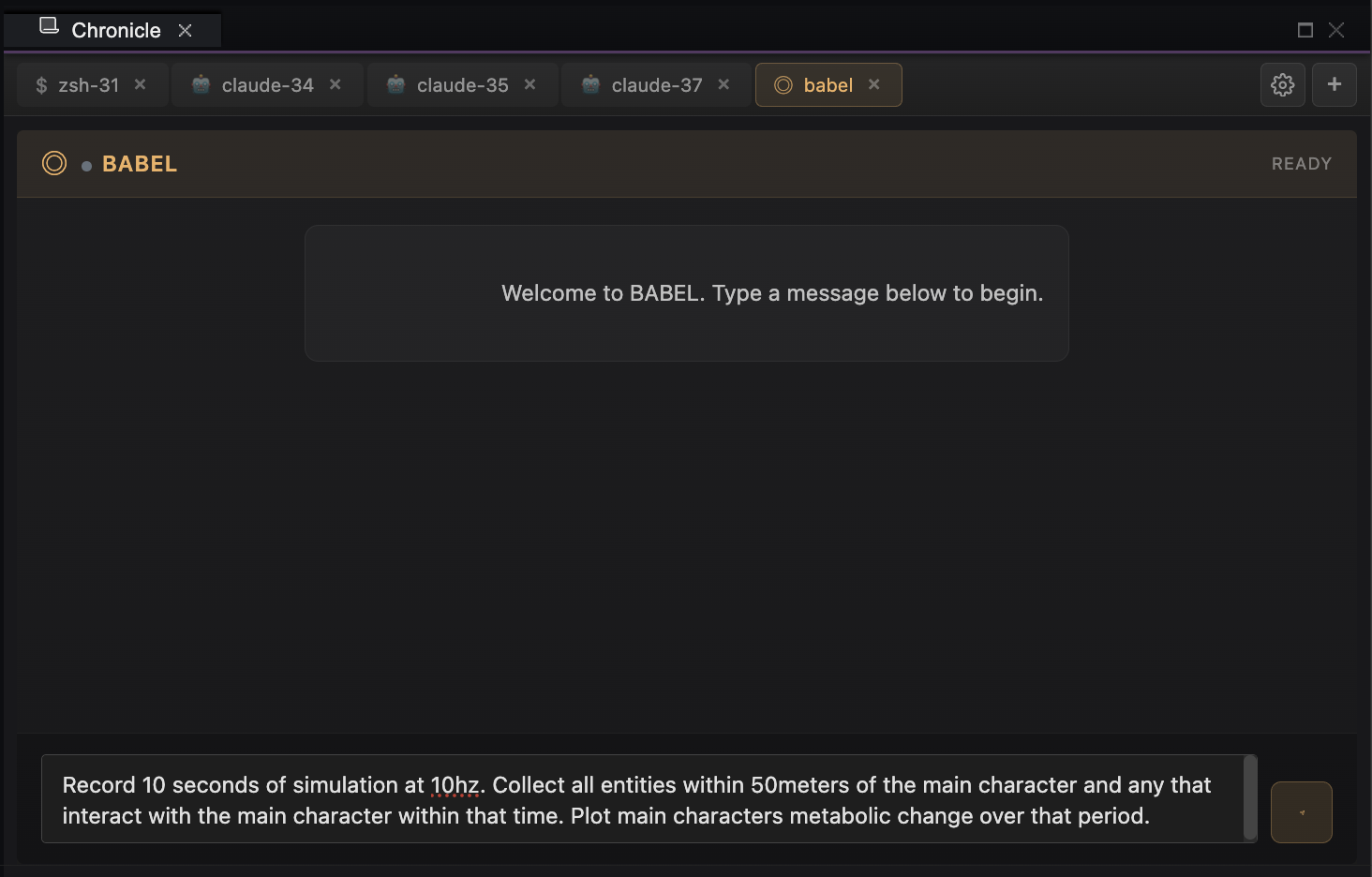

MUSE Living Worlds are deterministic simulations. Every state transition, every decision, every consequence traces back to explicit causal systems. LLMs don't generate the story. They translate it. BABEL is the layer that makes that possible—and understanding what it does (and doesn't do) explains why MUSE works differently than anything else in the "AI storytelling" space.

The Problem With AI-Generated Narrative

Let's be direct about what LLMs are: sophisticated pattern completion engines. They predict plausible next tokens based on training data. They're extraordinary at generating text that feels coherent. They're terrible at maintaining truth over time.

Ask an LLM to run a simulation and you get:

- Hallucinated state. Characters "remember" things that never happened. Events contradict earlier events. The world drifts.

- No causal grounding. Why did the blacksmith get angry? Because it seemed narratively appropriate—not because his daughter is sick, he hasn't slept, and you just insulted his craft in front of customers.

- Context collapse. After enough conversation, the model forgets. Or summarizes. Or invents. The world stops being consistent.

For a chatbot, this is fine. For a world you return to after a week expecting continuity, it's fatal.

I knew from the start that MUSE couldn't be built on probabilistic generation. The simulation had to be deterministic. Every state change had to be traceable. The world had to be true—not plausible. True.

But I also knew natural language was the right interface. Players shouldn't learn command syntax. They should just talk.

The question was: how do you get the accessibility of natural language without the unreliability of letting an LLM run the show?

The Insight: Translation, Not Generation

The breakthrough came when I stopped thinking of LLMs as generators and started thinking of them as translators.

A translator doesn't invent meaning. They convert meaning from one form to another. The source material is authoritative. The translation serves it.

That's BABEL's role. It sits at the boundary between human language and simulation state—converting in both directions, but never creating the truth it describes.

Inbound: Player says "I want to search under the bed." BABEL parses intent, resolves "the bed" against the entity registry, validates the action is possible, and dispatches a structured command to FONT. The simulation runs. BABEL doesn't decide what's under the bed. The world already knows.

Outbound: FONT updates state—you found a journal, the owner's stress response triggers, dust particles enter the air affecting visibility. BABEL translates that state delta into prose: "Dust billows as you drag out a leather journal, its spine cracked with age. From the doorway, you hear the floorboard creak. Someone's watching."

The LLM generates the words. It doesn't generate the facts. The facts come from FONT, and FONT is deterministic.
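To make the inbound path concrete, here is a minimal Python sketch of the parse, resolve, and dispatch steps. It is an illustration under stated assumptions, not BABEL's actual code: `ENTITIES`, `VERBS`, `Command`, `parse_intent`, and `translate_inbound` are all hypothetical names, and a keyword match stands in for the LLM that would really parse intent.

```python
from dataclasses import dataclass
from typing import Optional, Tuple, Union

# Hypothetical entity registry: the simulation, not the parser, owns these facts.
ENTITIES = {"bed": "entity:bed_01", "door": "entity:door_03"}

# Hypothetical verbs the simulation supports.
VERBS = {"search": "SEARCH", "open": "OPEN"}


@dataclass(frozen=True)
class Command:
    """A structured command ready to hand to the simulation."""
    action: str
    target: str


def parse_intent(text: str) -> Tuple[Optional[str], Optional[str]]:
    """Stand-in for the LLM step: pick out the first known verb and known noun."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    verb = next((w for w in words if w in VERBS), None)
    noun = next((w for w in words if w in ENTITIES), None)
    return verb, noun


def translate_inbound(text: str) -> Union[Command, str]:
    """Return a structured command, or a clarification request if unresolved."""
    verb, noun = parse_intent(text)
    if verb is None:
        return "I'm not sure what you want to do."
    if noun is None:
        return f"What do you want to {verb}?"
    # Note what is absent here: nothing decides what is under the bed.
    return Command(action=VERBS[verb], target=ENTITIES[noun])
```

Calling `translate_inbound("I want to search under the bed.")` yields a `Command` for the simulation to execute. An unresolvable request comes back as a question for the player instead of a guess.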

Grounded Narration

I started calling this pattern "grounded narration." The LLM is grounded in simulation truth. It can describe, interpret, add texture—but it cannot contradict. It cannot invent. It cannot hallucinate state that doesn't exist.

This is enforced architecturally:

- BABEL receives structured state from FONT, not free-form context

- The prompt includes explicit constraints: describe only what the simulation provides

- Output is validated against known state before delivery

- If there's ambiguity, BABEL asks for clarification rather than guessing

The result is prose that feels authored—rich, adaptive, voice-consistent—but remains causally honest. You can trace any narrative moment back to simulation state. You can inspect why something happened. The story is emergent, but it's not made up.
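One way to picture the validation step is the sketch below. It is deliberately crude and rests on assumptions: `WORLD_ENTITIES`, `build_prompt`, and `ungrounded_mentions` are hypothetical names, the state delta is a plain dict, and a word-level check stands in for whatever validation BABEL really performs.

```python
# Hypothetical vocabulary of every entity name the world knows about.
WORLD_ENTITIES = {"journal", "sword", "dust", "floorboard", "lantern"}


def build_prompt(delta: dict) -> str:
    """Turn a structured state delta into a constrained prompt for the narrator."""
    facts = "\n".join(f"- {key}: {value}" for key, value in sorted(delta.items()))
    return "Describe only the facts listed below. Do not add objects or events.\n" + facts


def ungrounded_mentions(prose: str, delta: dict) -> set:
    """Return world entities the prose mentions that the delta does not contain."""
    words = {w.strip(".,!?'\"").lower() for w in prose.split()}
    mentioned = words & WORLD_ENTITIES
    return mentioned - set(delta)
```

If `ungrounded_mentions` returns anything, the draft is rejected and regenerated before the player sees it. A real check would need to handle synonyms, plurals, and implied events, which this sketch ignores.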

One System, Many Masks

BABEL isn't just the narrator in GLYPH. It's the same infrastructure running behind every tool in the ecosystem, wearing different masks.

In GLYPH (reader interface): BABEL is the narrator. It translates player intent into simulation commands and simulation state into immersive prose. It maintains voice consistency for the world—tone, vocabulary, rhythm—while staying grounded in what's actually true.

In CYPHER (development workbench): BABEL is a development assistant. "Show me everyone within 50 meters who's hungry and afraid" becomes a registry query, executes against FONT, returns structured results, and BABEL interprets: "Found 12 entities. Highest fear-hunger correlation in the northeast cluster—three children near the collapsed granary."

In CLIO (development environment): BABEL helps me interrogate my own codebase. "What systems depend on the memory consolidation heuristic?" is parsed into a SIBYL query that traverses the dependency graph, and the answer comes back in plain English. I'm talking to my architecture.

One semantic layer. Multiple interfaces. The registry underneath is the same—BABEL just adjusts its persona and output format based on context.
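As a rough illustration of the CYPHER example, the sketch below shows a natural-language request reduced to a structured query, then rendered differently per persona. Everything here is assumed for the sake of the example: the `Entity` fields, the `NearbyQuery` shape, the 0.0 to 1.0 hunger and fear scales, and the `render` templates (which stand in for LLM output) are hypothetical.

```python
from dataclasses import dataclass
from math import hypot


@dataclass(frozen=True)
class Entity:
    name: str
    x: float
    y: float
    hunger: float  # hypothetical 0.0 to 1.0 scale
    fear: float    # hypothetical 0.0 to 1.0 scale


@dataclass(frozen=True)
class NearbyQuery:
    """Structured form of 'everyone within N meters who's hungry and afraid'."""
    origin: tuple
    radius_m: float
    min_hunger: float
    min_fear: float


def run_query(query, entities):
    """Filter entities by distance from the origin and by both thresholds."""
    ox, oy = query.origin
    return [
        e for e in entities
        if hypot(e.x - ox, e.y - oy) <= query.radius_m
        and e.hunger >= query.min_hunger
        and e.fear >= query.min_fear
    ]


def render(results, persona):
    """Same results, different mask. Templates stand in for the LLM here."""
    if persona == "cypher":
        names = ", ".join(e.name for e in results) or "none"
        return f"Found {len(results)} entities: {names}"
    if persona == "glyph":
        return f"You sense {len(results)} frightened, hungry souls nearby."
    raise ValueError(f"unknown persona: {persona}")
```

The query and its results are identical in both cases. Only the final rendering changes with the persona.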

The Registry Makes It Work

BABEL's power comes from what it dispatches to: a typed command registry that knows every valid operation in the ecosystem.

Here's how it works:

- Build time: PYNAX reflects over the C++, Go, and Python APIs, extracting every callable operation with its parameters and constraints

- Schema generation: Those operations get written to SQLite as typed command definitions

- Runtime: The registry loads to cache. BABEL parses natural language against it—matching intent to valid commands, resolving entity references, validating parameters

- Dispatch: Valid commands execute. Invalid ones get clarification requests, not guesses.

This means BABEL can only do things the simulation actually supports. It can't hallucinate capabilities. If there's no "teleport" command registered, asking to teleport gets a graceful "I don't know how to do that" rather than an invented response.

The registry is the contract. BABEL honors it.
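The registry flow can be sketched with Python's standard `sqlite3` module. This is a simplified illustration, not the real schema: the `commands` table, its JSON `params` column, the `TYPES` map, and the `dispatch` function are assumptions, and the rows are inserted by hand where PYNAX would generate them at build time.

```python
import json
import sqlite3

# Stand-in for the build-time output: typed command definitions in SQLite.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE commands (name TEXT PRIMARY KEY, params TEXT NOT NULL)")
conn.executemany(
    "INSERT INTO commands VALUES (?, ?)",
    [
        ("search", json.dumps({"target": "str"})),
        ("move", json.dumps({"target": "str", "speed": "float"})),
    ],
)

# Hypothetical mapping from schema type names to Python types.
TYPES = {"str": str, "float": float}


def load_registry(connection):
    """Runtime step: load every command definition into an in-memory cache."""
    rows = connection.execute("SELECT name, params FROM commands")
    return {name: json.loads(params) for name, params in rows}


REGISTRY = load_registry(conn)


def dispatch(name, args):
    """Validate a parsed command against the registry before executing it."""
    spec = REGISTRY.get(name)
    if spec is None:
        return ("clarify", "I don't know how to do that.")
    if set(args) != set(spec):
        return ("clarify", f"'{name}' needs exactly: {', '.join(sorted(spec))}")
    for key, type_name in spec.items():
        if not isinstance(args[key], TYPES[type_name]):
            return ("clarify", f"'{key}' should be a {type_name}")
    return ("execute", {"command": name, "args": args})
```

With no `teleport` row in the table, `dispatch("teleport", {"target": "tower"})` returns a clarification rather than an invented result.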

Why Local LLMs Matter

BABEL supports multiple model backends: Claude, GPT-4, and local models through Ollama. The local option isn't just about cost; it's about reliability, privacy, and latency.

Offline capability: Living Worlds should work without internet. Players shouldn't lose access to their save because an API is down. Local models mean the translation layer runs on-device.

Privacy: Your choices, your narrative, your world. None of it needs to leave your machine. The simulation is local. The narration can be too.

Latency: For real-time interaction, local models eliminate network round-trip delays. Response time then depends on the model and the player's hardware, not on a remote API.

The multi-model architecture also provides fallback. If one provider is unavailable, BABEL routes to another. The player never sees the infrastructure—they just see consistent, responsive prose.
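The fallback idea can be sketched as an ordered list of backends tried in turn. The names below (`BackendUnavailable`, `narrate_with_fallback`, and the two stub backends) are hypothetical, and the stubs stand in for real provider calls, none of which are shown here.

```python
class BackendUnavailable(Exception):
    """Raised by a backend that cannot serve the request right now."""


def narrate_with_fallback(prompt, backends):
    """Try each (name, callable) backend in order; return the first success."""
    errors = []
    for name, call in backends:
        try:
            return name, call(prompt)
        except BackendUnavailable as exc:
            errors.append(f"{name}: {exc}")
    # Every backend failed, so surface all the reasons at once.
    raise BackendUnavailable("; ".join(errors))


def remote_backend(prompt):
    """Stub for a hosted model that is currently unreachable."""
    raise BackendUnavailable("network down")


def local_backend(prompt):
    """Stub for an on-device model."""
    return f"[local] {prompt}"
```

Ordering the list is the design decision: putting the local backend first favors offline play and privacy, while putting it last treats it as a safety net.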

What BABEL Doesn't Do

It's worth being explicit about the boundaries:

- BABEL doesn't simulate. FONT does. BABEL translates.

- BABEL doesn't decide outcomes. The heuristics in FONT do. BABEL describes them.

- BABEL doesn't maintain world state. The database does. BABEL queries it.

- BABEL doesn't have memory. FONT's memory systems do. BABEL surfaces them.

Every creative writing instinct says the narrator should have agency. BABEL deliberately doesn't. Its job is to serve the truth, not to improve it. The simulation is the author. BABEL is the voice.

The Divide That Matters

The AI industry is racing toward "AI-generated everything." Procedural content. Dynamic stories. Infinite narrative.

Most of it is vapor. Impressive in demos, incoherent over time. The moment you care about continuity—the moment you want to return to a world and have it remember—the cracks show.

MUSE takes the opposite bet: deterministic simulation as the foundation, AI as the interface. The hard part isn't generating plausible text. The hard part is maintaining truth at scale. That's what FONT does. BABEL just makes it legible.

The divide between deterministic simulation and black-box AI isn't a limitation. It's the architecture. It's why Living Worlds can be worlds—persistent, causal, yours—rather than elaborate chatbots wearing narrative costumes.

What I Learned

Constraints enable trust. Because BABEL is explicitly limited in what it can do, players can trust what it says. The narrator can't lie because it doesn't know how.

Translation is underrated. The industry obsesses over generation. But the real unlock for natural language interfaces is reliable translation—taking fuzzy human intent and mapping it to precise system operations.

Architecture is philosophy. The decision to keep LLMs out of the simulation loop isn't just technical. It's a statement about what kind of stories matter: the ones that emerge from systems, not the ones that are invented on demand.

The hardest part is saying no. Every week I think of something cool BABEL could generate if I let it. Character backstories. Dynamic dialogue trees. Invented histories. And every week I remember: that's not what makes MUSE different. The simulation is the story. BABEL just tells it.

What's Next

This is the second post in a series about building the MUSE Ecosystem. The first covered FONT and the origin story. Next, I'll write about Living Worlds themselves—the philosophy behind emergent narrative and what it means to build stories that happen rather than stories that are told.

BABEL is the bridge. FONT is the truth. The world is what emerges when you let them work together.

This post is part of a series on building the MUSE Ecosystem. Follow for updates on the architecture, the philosophy, and the path toward Living Worlds.