A decade ago, I co-founded a startup building ML-based health tech products, starting with wearable activity recognition. I had domain expertise in neuroscience and biomechanics. I could code. But I had essentially no machine learning experience beyond basic concepts.

Looking back, I realize: Epoche is the tool that would have fast-tracked my entry into ML. Not because it would have made me a better engineer, but because it would have let me apply my domain expertise immediately, while I was still learning the mechanics.

This isn't a problem with the tools themselves: sklearn, SHAP, and TensorFlow are exceptional. But there's a gap between what these libraries can do and who can use them effectively. That gap keeps ML interpretability from being used as a way to understand data through the lens of domain expertise.

The False Dichotomy We Accept

There's an unspoken assumption in machine learning: if you're serious about it, you learn to code the full stack. If you can't implement your own interpretability pipeline, you're not really doing ML.

I've watched this happen repeatedly. Capable researchers—people who think deeply about their data—hear "we need to train a neural network" and disengage. They don't feel entitled to weigh in on model architecture, feature engineering, or interpretation.

Some of the most important questions in applied ML come from people who think narratively, not algorithmically. Clinicians who recognize patterns in patient outcomes. Neuroscientists who understand what features should matter based on decades of research. These people don't need to implement Gradient Boosting from scratch. They need to understand what the model learned, question whether it makes sense, and contribute their domain expertise.

The barrier isn't the algorithms. The barrier is fragmentation. You train a model in one script. Calculate SHAP values in another. Generate permutation importance somewhere else. Six months later, you can barely reconstruct what you did, let alone why.

We've mistaken implementation for understanding, and left brilliant minds on the sidelines because they don't happen to enjoy stitching together scattered tools.

Interpretability Is Translation

Here's the realization that shaped Epoche: interpretability is translation, not computation.

The model has already done the math. The hard part isn't calculating SHAP values—those are solved problems. The hard part is making that information meaningful to someone who needs to act on it.

Before (typical ML tool output):

Feature importance:

- Fz_theta_power: 0.82
- Pz_alpha_power: 0.67
- C3_beta_power: 0.54

With Epoche: "The model consistently identified frontal midline theta (Fz, 4-8 Hz) as the strongest predictor (SHAP = 0.82). This aligns with established neuroscience: theta oscillations in medial prefrontal cortex are associated with cognitive control. Your classifier may be detecting attention-related differences between conditions.

Secondary importance in parietal alpha (Pz, 8-13 Hz) suggests possible working memory involvement. The spatial pattern (frontal theta plus parietal alpha) is characteristic of task-engagement networks.

Note: C3 beta showed moderate importance. This is left sensorimotor cortex; verify this isn't capturing motor preparation artifacts from button presses."

This is what researchers need. Not just ranked lists of importance scores, but context, interpretation, and hypotheses they can test. And you don't need to understand the math to evaluate whether the story makes sense; you need domain knowledge.
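At its simplest, the first step of that translation is attaching domain vocabulary to raw feature names. The sketch below is a minimal illustration, not Epoche's implementation: it assumes features are named `<channel>_<band>_power` as in the "before" list, and the band ranges and channel-to-region table (`BANDS`, `REGIONS`) are hypothetical lookup tables that a real tool would need to curate carefully.

```python
import re

# Hypothetical lookup tables; a real tool needs a curated, referenced knowledge base.
BANDS = {"delta": (1, 4), "theta": (4, 8), "alpha": (8, 13), "beta": (13, 30), "gamma": (30, 45)}
REGIONS = {
    "Fz": "frontal midline",
    "Cz": "central midline",
    "Pz": "parietal midline",
    "C3": "left sensorimotor",
    "C4": "right sensorimotor",
}
# Assumed naming convention: <channel>_<band>_power, e.g. "Fz_theta_power".
FEATURE_RE = re.compile(r"^(?P<channel>[A-Za-z0-9]+)_(?P<band>[a-z]+)_power$")


def describe(feature, importance):
    """Render one feature in spectral/spatial terms, or fall back to the raw name."""
    m = FEATURE_RE.match(feature)
    if m is None or m["band"] not in BANDS:
        return f"{feature}: importance {importance:.2f} (no EEG context available)"
    lo, hi = BANDS[m["band"]]
    region = REGIONS.get(m["channel"], "unmapped")
    return f"{region} {m['band']} ({m['channel']}, {lo}-{hi} Hz): importance {importance:.2f}"


def translate(importances):
    """Describe features from most to least important."""
    ranked = sorted(importances.items(), key=lambda kv: kv[1], reverse=True)
    return [describe(name, value) for name, value in ranked]
```

For the example above, `translate({"Fz_theta_power": 0.82, "Pz_alpha_power": 0.67, "C3_beta_power": 0.54})` starts with `"frontal midline theta (Fz, 4-8 Hz): importance 0.82"`. The interpretive sentences (cognitive control, motor artifacts) would sit on top of this kind of structured mapping.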

Standing on Giants

Epoche doesn't reimplement machine learning. Under the hood, it's sklearn, XGBoost, SHAP, LIME—the same battle-tested libraries that power production systems. Epoche is a different interface to the same foundation.

Think of it like Git: a CLI user and a GUI client are using the same underlying system. Epoche is that GUI layer for ML interpretation, not because the command line is bad, but because different modes of thinking deserve different interfaces. Even experienced engineers benefit when the friction between question and answer is reduced.

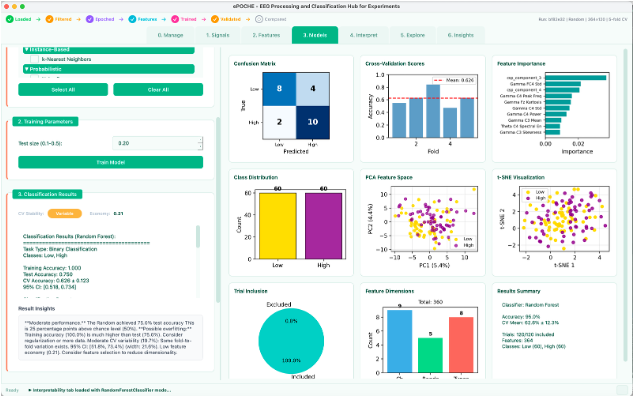

What Epoche Does Differently

Exploration Engine

Most ML tools ask: "which model should I use?" Epoche asks: "what can we learn from all of them?"

The Explore tab treats everything as a parameter. Select any combination of 18 model types. Set each hyperparameter to fixed (single value) or explore (range/list). Run one unified grid search across the entire space.
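The "fixed vs. explore" idea maps naturally onto scikit-learn's `GridSearchCV`, which accepts a list of parameter grids and lets the estimator itself be a grid parameter. This is a minimal sketch under that assumption, not Epoche's code: `build_search_space` and `run_search` are hypothetical helpers, and only two model types stand in for the eighteen.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler


def build_search_space(spec):
    """Turn (estimator, {param: value}) pairs into a GridSearchCV param_grid.

    A list value means "explore" (try every entry); any other value means
    "fixed" and is wrapped in a one-element list.
    """
    grid = []
    for estimator, params in spec:
        entry = {"clf": [estimator]}
        for name, value in params.items():
            entry[f"clf__{name}"] = value if isinstance(value, list) else [value]
        grid.append(entry)
    return grid


def run_search(X, y, cv=5):
    # Two illustrative model types; each dict mixes fixed and explored values.
    spec = [
        (LogisticRegression(max_iter=1000), {"C": [0.1, 1.0, 10.0]}),
        (RandomForestClassifier(random_state=0), {"n_estimators": 100, "max_depth": [3, None]}),
    ]
    # The "clf" step is a placeholder that the grid swaps for each model type.
    pipe = Pipeline([("scale", StandardScaler()), ("clf", LogisticRegression())])
    search = GridSearchCV(pipe, build_search_space(spec), cv=cv, scoring="accuracy")
    return search.fit(X, y)


if __name__ == "__main__":
    X, y = make_classification(n_samples=200, n_features=10, random_state=0)
    search = run_search(X, y)
    print(search.best_params_["clf"], round(search.best_score_, 3))
```

Because every model type lives in one grid, `search.cv_results_` holds a single table across the whole space (five candidates here), which is the raw material for sensitivity heatmaps.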

After individual models finish, Epoche automatically tests ensemble combinations (hard voting, soft voting, weighted voting, and stacking with configurable meta-learners) across every size-k subset of the top n models, C(n,k) combinations for each k. The results surface as parameter sensitivity heatmaps, ranked ensemble tables, and a Pareto frontier plotting accuracy vs. model complexity vs. training speed. Everything saves to SQLite for reload and comparison.
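The subset enumeration and the Pareto frontier are both small once held-out predictions exist. The sketch below shows only soft voting (hard, weighted, and stacking are omitted). It assumes each model's class probabilities were computed on the same validation split, ideally one not used to pick the top models. All function names are hypothetical.

```python
from itertools import combinations

import numpy as np


def soft_vote_accuracy(probas, y_true):
    """Average class probabilities across models, then take the argmax."""
    avg = np.mean(probas, axis=0)  # shape: (n_samples, n_classes)
    return float(np.mean(avg.argmax(axis=1) == y_true))


def rank_ensembles(model_probas, y_true, sizes=(2, 3)):
    """Score every size-k subset of the candidate models by soft-voting accuracy."""
    names = list(model_probas)
    results = []
    for k in sizes:
        for subset in combinations(names, k):  # C(n, k) subsets for each k
            acc = soft_vote_accuracy([model_probas[n] for n in subset], y_true)
            results.append((subset, acc))
    return sorted(results, key=lambda r: r[1], reverse=True)


def pareto_front(points):
    """Keep non-dominated (name, accuracy, complexity, train_seconds) tuples.

    Higher accuracy is better; lower complexity and lower training time are better.
    """
    def dominates(q, p):
        no_worse = q[1] >= p[1] and q[2] <= p[2] and q[3] <= p[3]
        strictly_better = q[1] > p[1] or q[2] < p[2] or q[3] < p[3]
        return no_worse and strictly_better

    return [p for p in points if not any(dominates(q, p) for q in points)]
```

For example, `pareto_front([("A", 0.9, 10, 5.0), ("B", 0.85, 5, 1.0), ("C", 0.8, 6, 2.0)])` keeps A and B. C is dropped because B is more accurate, simpler, and faster. The O(n²) dominance check is fine for the few hundred candidates a grid search typically produces.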

The design philosophy: researchers shouldn't have to decide between Random Forest and XGBoost before seeing data. They should explore the landscape and let the results inform the decision.

Cross-Model Consensus and Hypothesis Generation

This is where Epoche does something genuinely different. Most tools compare models by accuracy. Epoche compares what different models learn from the same data.

When you run a cross-model comparison, Epoche computes permutation importance for each model, then generates statistical consensus analysis: pairwise Spearman rank correlations between model importances, Friedman tests across 3+ models, and per-feature coefficient of variation to identify which features are stable across architectures and which are model-dependent.
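The three statistics named above are all available in SciPy and NumPy. This sketch assumes each model's permutation importances are already computed over the same feature order. It treats models as the Friedman "treatments" and features as the "blocks", which is why at least three models are required. The 0.5 coefficient-of-variation cutoff for "stable" is a hypothetical choice.

```python
import numpy as np
from scipy.stats import friedmanchisquare, spearmanr


def consensus(importances, feature_names, stable_cv=0.5):
    """importances maps model name -> permutation importances (one per feature)."""
    models = list(importances)
    M = np.vstack([np.asarray(importances[m], dtype=float) for m in models])

    # Pairwise Spearman rank correlation between models' importance vectors.
    pairwise = {}
    for i in range(len(models)):
        for j in range(i + 1, len(models)):
            rho, _ = spearmanr(M[i], M[j])
            pairwise[(models[i], models[j])] = float(rho)

    # Friedman test: models as treatments, features as blocks (needs >= 3 models).
    friedman_p = None
    if len(models) >= 3:
        _, p = friedmanchisquare(*M)
        friedman_p = float(p)

    # Per-feature coefficient of variation across models; inf where the mean is ~0.
    mean = M.mean(axis=0)
    std = M.std(axis=0, ddof=1)
    cv = np.divide(std, np.abs(mean), out=np.full_like(mean, np.inf), where=np.abs(mean) > 1e-12)

    stable = [f for f, c in zip(feature_names, cv) if c < stable_cv]
    return {
        "pairwise_spearman": pairwise,
        "friedman_p": friedman_p,
        "cv": dict(zip(feature_names, cv.tolist())),
        "stable": stable,
    }
```

A low coefficient of variation means the architectures agree on a feature's weight. A high one flags the model-dependent features that the hypothesis step then tries to explain.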

Then it goes further. A hypothesis generator analyzes divergence patterns and produces testable hypotheses:

- Tree-based models weight a feature heavily but linear models don't → "Possible nonlinear interaction. Consider adding explicit interaction features or examining partial dependence plots."

- One model rates a feature much higher than all others → "Possible artifact or overfitting to spurious correlation. Verify this feature is meaningful outside training data."

- Divergence clusters by frequency band → "Multiple frequency-dependent processes may underlie classification. Consider band-specific sub-analyses."

Each hypothesis includes confidence level, supporting evidence, and suggested follow-up tests—framed in EEG/neuroscience context when the features contain channel or frequency information.
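Rules like the first two bullets can be expressed as simple threshold checks over normalized importances. The sketch below is illustrative only. The model-family sets, the 0.75/0.25 "high"/"low" cutoffs, and the 3x outlier ratio are all hypothetical. It also omits the confidence levels and the frequency-band clustering rule.

```python
from dataclasses import dataclass

# Hypothetical model-family labels; a real tool would derive these from the estimator type.
TREE_MODELS = {"random_forest", "xgboost", "extra_trees"}
LINEAR_MODELS = {"logistic_regression", "linear_svm"}


@dataclass
class Hypothesis:
    feature: str
    text: str
    evidence: str


def generate_hypotheses(importances, high=0.75, low=0.25, outlier_ratio=3.0):
    """importances[model][feature] holds a normalized score in [0, 1], e.g. a percentile rank."""
    features = list(next(iter(importances.values())))
    hypotheses = []
    for f in features:
        scores = {m: imp[f] for m, imp in importances.items()}
        tree = [v for m, v in scores.items() if m in TREE_MODELS]
        linear = [v for m, v in scores.items() if m in LINEAR_MODELS]

        # Rule 1: tree models agree the feature matters, linear models do not.
        if tree and linear and min(tree) >= high and max(linear) <= low:
            hypotheses.append(Hypothesis(
                f,
                "Possible nonlinear interaction. Consider adding explicit interaction "
                "features or examining partial dependence plots.",
                f"tree min={min(tree):.2f}, linear max={max(linear):.2f}",
            ))

        # Rule 2: one model rates the feature far above every other model.
        top = max(scores, key=scores.get)
        others = [v for m, v in scores.items() if m != top]
        if others and scores[top] >= outlier_ratio * max(max(others), 1e-9):
            hypotheses.append(Hypothesis(
                f,
                "Possible artifact or overfitting to spurious correlation. Verify this "
                "feature is meaningful outside training data.",
                f"{top}={scores[top]:.2f} vs. next highest={max(others):.2f}",
            ))
    return hypotheses
```

Keeping each rule's evidence string alongside its text lets a reader judge the claim directly instead of trusting the generator.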

Seven Interpretability Methods, Integrated

Epoche integrates SHAP (global and instance-level), LIME, permutation importance, decision rules, unified importance profiles (combining all available methods with percentile-rank normalization), cross-model comparison, and cross-dataset comparison.

The unified importance profile is particularly useful: it extracts importance via every method applicable to the model type (builtin importances for trees, coefficients for linear models, permutation for everything, TreeSHAP when available), normalizes each to percentile ranks for fair comparison, and produces a consensus ranking. When methods disagree, that's information—not noise.
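Percentile-rank normalization puts methods with incompatible scales (coefficients, accuracy drops, SHAP magnitudes) onto a common 0-to-1 footing. A minimal sketch, assuming every method scores the same features in the same order. It uses the absolute value for methods listed in `magnitude_methods` (a hypothetical parameter) and reports the max-minus-min spread of percentiles as a simple disagreement signal.

```python
import numpy as np
from scipy.stats import rankdata


def unified_profile(method_scores, feature_names, magnitude_methods=("coefficients",)):
    """Combine importance methods via percentile ranks; returns (feature, consensus, spread)."""
    percentiles = []
    for method, scores in method_scores.items():
        s = np.asarray(scores, dtype=float)
        if method in magnitude_methods:
            s = np.abs(s)  # sign of a linear coefficient is direction, not importance
        percentiles.append(rankdata(s, method="average") / len(s))  # 1.0 = most important

    P = np.vstack(percentiles)  # shape: (n_methods, n_features)
    consensus = P.mean(axis=0)
    spread = P.max(axis=0) - P.min(axis=0)  # large spread = methods disagree
    order = np.argsort(-consensus, kind="stable")
    return [(feature_names[i], float(consensus[i]), float(spread[i])) for i in order]
```

The spread column is the "disagreement is information" idea made concrete. A feature with a high consensus and a large spread deserves a closer look before it goes into a paper.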

Cross-dataset comparison lets you load two saved interpretations and compare importance patterns: Pearson and Spearman correlations, stable features (important in both), and dataset-specific features. This answers the question: "Is my model learning something generalizable, or something specific to this dataset?"
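A cross-dataset check needs only the two importance dictionaries. This sketch assumes "important" means the top quartile of each dataset's scores, an arbitrary cutoff chosen for illustration, and compares only the features the two datasets share.

```python
import numpy as np
from scipy.stats import pearsonr, spearmanr


def compare_datasets(imp_a, imp_b, top_q=0.75):
    """Correlate two feature-importance maps and split features into stable/specific sets."""
    shared = sorted(set(imp_a) & set(imp_b))
    a = np.array([imp_a[f] for f in shared], dtype=float)
    b = np.array([imp_b[f] for f in shared], dtype=float)

    r, _ = pearsonr(a, b)     # agreement in magnitude
    rho, _ = spearmanr(a, b)  # agreement in ranking

    # "Important" here = at or above the top_q quantile within each dataset.
    top_a = {f for f, v in zip(shared, a) if v >= np.quantile(a, top_q)}
    top_b = {f for f, v in zip(shared, b) if v >= np.quantile(b, top_q)}
    return {
        "pearson": float(r),
        "spearman": float(rho),
        "stable": sorted(top_a & top_b),
        "only_a": sorted(top_a - top_b),
        "only_b": sorted(top_b - top_a),
    }
```

Reporting both correlations matters. Pearson can be dominated by one very large importance, while Spearman asks only whether the orderings agree.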

EEG-Specific Visualizations

Epoche generates topographic brain maps from standard 10-20 electrode positions, colored by feature importance. A neuroscientist can glance at the topomap and immediately recognize a bilateral motor cortex pattern or an artifact in temporal channels. Frequency band aggregation shows importance across delta, theta, alpha, beta, and gamma, translating model behavior into the spectral language neuroscientists think in.
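Both views start from the same step: grouping feature importances by band and by channel. The sketch below assumes the `<channel>_<band>_power` naming convention and sums importances within each group. Per-channel totals are what a topomap would color, for example via MNE-Python's `mne.viz.plot_topomap`, which is not shown here. Features that don't match the pattern are skipped.

```python
import re
from collections import defaultdict

BANDS = ("delta", "theta", "alpha", "beta", "gamma")
# Assumed naming convention: <channel>_<band>_power, e.g. "Pz_alpha_power".
PATTERN = re.compile(r"^(?P<channel>[A-Za-z0-9]+)_(?P<band>delta|theta|alpha|beta|gamma)_power$")


def aggregate(importances):
    """Sum importances per frequency band and per electrode; ignore non-EEG features."""
    by_band = {band: 0.0 for band in BANDS}
    by_channel = defaultdict(float)
    for name, value in importances.items():
        m = PATTERN.match(name)
        if m is None:
            continue
        by_band[m["band"]] += value
        by_channel[m["channel"]] += value
    return by_band, dict(by_channel)
```

Summing, rather than averaging, rewards bands represented by many informative channels. Averaging would be the choice if channel counts differ a lot between bands.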

Publication-Ready Export

Results export as multi-page PDF (via matplotlib), self-contained HTML (with base64-embedded figures), or LaTeX source with separate figure files. Comparison reports include model tables, figures, statistical tests, and narrative sections—ready for supplementary materials or methods sections.
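A "self-contained" HTML report means the figures are inlined as `data:` URIs instead of linked files. Here is a minimal sketch using only the standard library. `html_report` is a hypothetical helper, and each section's figure is passed in as raw PNG bytes.

```python
import base64
import html


def html_report(title, sections):
    """Build a single-file HTML report.

    sections: list of (heading, paragraph_text, png_bytes_or_None).
    """
    parts = [
        "<!DOCTYPE html>",
        f"<html><head><meta charset='utf-8'><title>{html.escape(title)}</title></head><body>",
        f"<h1>{html.escape(title)}</h1>",
    ]
    for heading, text, png in sections:
        parts.append(f"<h2>{html.escape(heading)}</h2>")
        parts.append(f"<p>{html.escape(text)}</p>")
        if png is not None:
            # Inline the image so the file has no external dependencies.
            data = base64.b64encode(png).decode("ascii")
            parts.append(f'<img alt="{html.escape(heading)}" src="data:image/png;base64,{data}">')
    parts.append("</body></html>")
    return "\n".join(parts)
```

With matplotlib, the PNG bytes come from `buf = io.BytesIO(); fig.savefig(buf, format="png"); png = buf.getvalue()`. Escaping every piece of user text keeps feature names like `a<b` from breaking the markup.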

Database-Backed Persistence

Every model, exploration, ensemble, and interpretation saves to SQLite with full provenance. Six months later, you can load a model, apply a new interpretability method, and compare it to what you're building now. Exploration compounds over time instead of resetting with each new script.
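The persistence pattern can be sketched with Python's built-in `sqlite3` module. The schema below is hypothetical, not Epoche's. It stores each interpretation with the model it belongs to, the method, the exact parameters, and a UTC timestamp, which is enough to rerun or compare it later.

```python
import json
import sqlite3
from datetime import datetime, timezone

# Hypothetical schema: one row per interpretation run, with enough provenance to reproduce it.
SCHEMA = """
CREATE TABLE IF NOT EXISTS interpretations (
    id INTEGER PRIMARY KEY AUTOINCREMENT,
    model_name TEXT NOT NULL,
    method TEXT NOT NULL,
    params_json TEXT NOT NULL,
    importances_json TEXT NOT NULL,
    created_at TEXT NOT NULL
)
"""


def open_db(path=":memory:"):
    conn = sqlite3.connect(path)
    conn.execute(SCHEMA)
    return conn


def save_interpretation(conn, model_name, method, params, importances):
    cur = conn.execute(
        "INSERT INTO interpretations "
        "(model_name, method, params_json, importances_json, created_at) VALUES (?, ?, ?, ?, ?)",
        (
            model_name,
            method,
            json.dumps(params, sort_keys=True),
            json.dumps(importances),
            datetime.now(timezone.utc).isoformat(),
        ),
    )
    conn.commit()
    return cur.lastrowid


def load_interpretations(conn, model_name):
    rows = conn.execute(
        "SELECT method, params_json, importances_json, created_at "
        "FROM interpretations WHERE model_name = ? ORDER BY id",
        (model_name,),
    ).fetchall()
    return [
        {"method": m, "params": json.loads(p), "importances": json.loads(i), "created_at": c}
        for m, p, i, c in rows
    ]
```

Storing parameters as sorted JSON keeps the schema stable as new methods are added. A fuller provenance record would also capture library versions and a hash of the training data.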

Code Transparency

Epoche doesn't hide the machinery. Every operation logs the actual Python code that executed—not pseudocode, but the real function calls with your parameters. Copy it, modify it, build your own pipeline. This is how you graduate from GUI user to pipeline builder: by seeing working code applied to your actual data.
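One simple way to log "the real function calls with your parameters" is a decorator that records each call as a Python expression before running it. This is a hedged sketch of the idea, not how Epoche does it. `log_call` is a hypothetical helper, and arguments whose `repr` isn't valid source (NumPy arrays, DataFrames) would need to be logged by variable name instead.

```python
import functools


def log_call(log):
    """Decorator factory: append a copy-pasteable call expression to `log` on every call."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            arg_src = [repr(a) for a in args] + [f"{k}={v!r}" for k, v in kwargs.items()]
            log.append(f"{func.__qualname__}({', '.join(arg_src)})")
            return func(*args, **kwargs)
        return wrapper
    return decorator
```

Decorating an analysis function with `@log_call(code_log)` and then calling `compute_importance(10, random_state=0)` appends the string `"compute_importance(10, random_state=0)"`, which a user can paste straight into their own script.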

Who This Is For

Neuroscientists who need to know if their classifier is learning P300 components or eye blinks—and want cross-model consensus to surface the answer.

Clinicians testing whether assessments measure what they claim—with interpretability infrastructure already built.

Students learning ML who need to build intuition for how different models approach the same problem—by seeing it happen with their own data.

Experienced engineers who want integrated exploration and interpretation without context-switching between writing analysis scripts and investigating the actual problem.

Anyone with domain knowledge to contribute who doesn't want to spend months coordinating scattered tools before asking questions of their data.

The Argument I'm Actually Making

We've conflated implementation skill with conceptual understanding, and that has limited who gets to advance the field.

The barrier to entry for applied ML shouldn't be "are you comfortable writing Python?" It should be "do you have questions worth asking?"

This isn't a criticism of any of the libraries that make modern ML possible. Those tools are fundamental—literally, they form the foundation Epoche is built on. But integration matters. Persistence matters. Reducing cognitive load matters.

Concepts matter more than implementation when you're generating ideas. The ideation phase, the hypothesis generation, the "does this make sense?" evaluation—those don't require coding. They require thinking. And they're enhanced by tools that remove friction from exploration.

What I've Learned

I love code. I write C++, Go, Python, TypeScript, Dart. I've built compilers, simulation engines, GPU kernels.

But I've also worked with clinicians, psychologists, neuroscientists. I've seen brilliant insights come from people who would never call themselves programmers. The best work happens when domain expertise and technical capability meet—but those don't need to live in the same person. The tool can be the bridge.

What's Next

This is the sixth post in a series about the tools and platforms I've built. Previous posts covered FONT, BABEL, research vs. production infrastructure, cognitive load and developer experience, and building systems you can inspect and trust.

Epoche extends those principles (build for inspection, make state observable, reduce cognitive load) into EEG analysis and machine learning. If you're a researcher working with EEG data and ML feels like a black box you're supposed to trust, Epoche might be worth exploring. Not because it's simpler, but because interpretation and exploration are first-class concerns, and the story comes from what your models actually learned.

This post is part of a series on building tools that bridge technical capability with human understanding.