MUSE Ecosystem · Gothic Grandma LLC

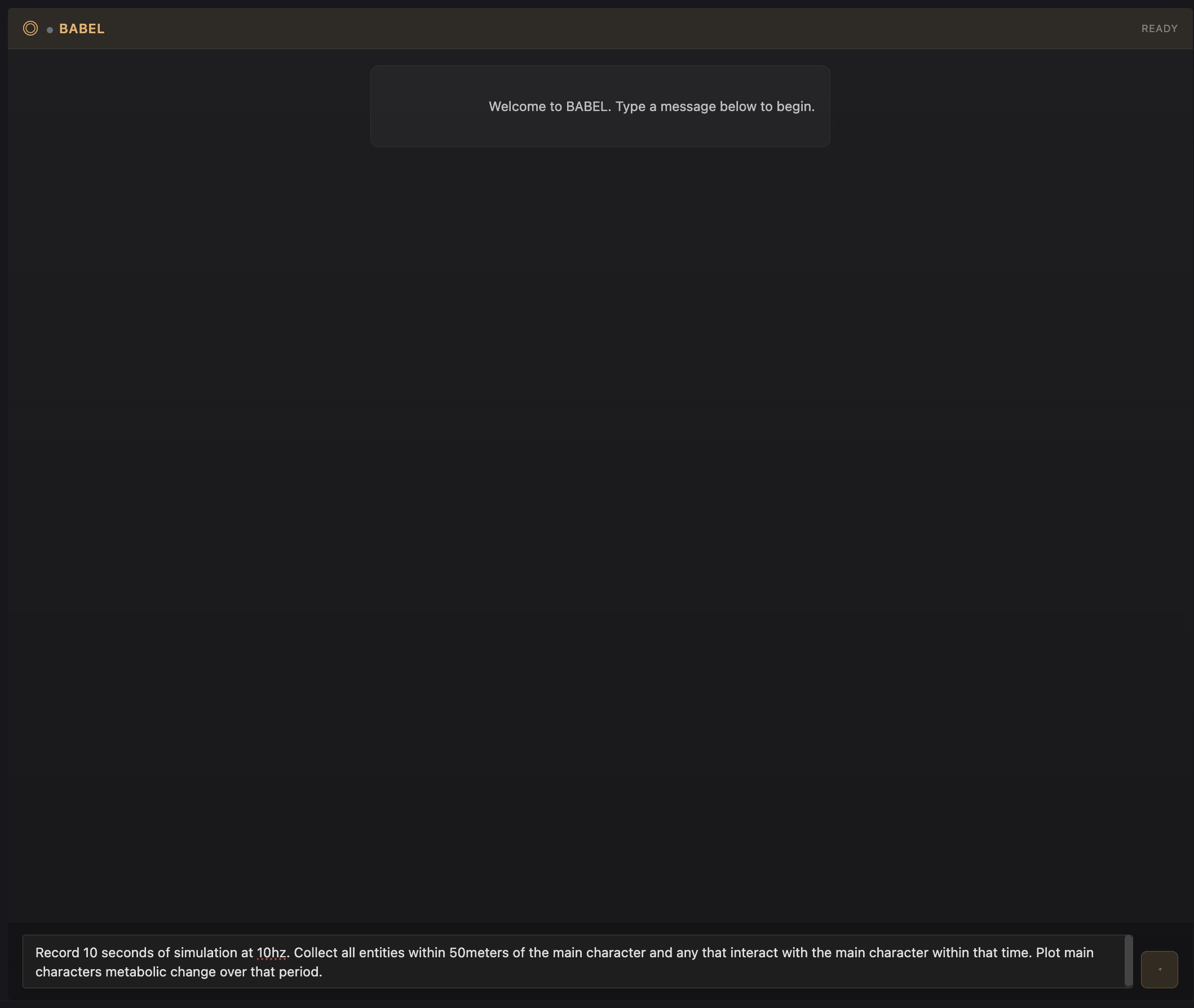

BABEL

Semantic Interface

What Problem It Solves

The MUSE ecosystem has a semantic gap: humans think in natural language while the platform operates through structured commands, entity references, and system parameters. Different roles need different capabilities—narrative generation for readers, code assistance for developers, system design guidance for kernel engineers—but they all share the same underlying need: intent parsing → entity resolution → command dispatch.

BABEL bridges this gap as a semantic platform interface. Its NLP pipeline parses natural language into structured intents, resolves references against the entity registry via vector similarity search, and dispatches validated commands with permission-aware routing. The pipeline runs only at the platform boundary, never inside the simulation loop.
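The three stages (intent parsing, entity resolution, command dispatch) can be sketched in a few lines. This is only an illustration under stated assumptions: the `Intent` and `Command` types, the "verb metric for entity" phrase shape, and the alias table are hypothetical stand-ins, and the substring lookup takes the place of the vector similarity search BABEL is described as using.

```python
from dataclasses import dataclass


@dataclass
class Intent:
    action: str         # leading verb, e.g. "plot"
    metric: str         # what to measure, e.g. "heart rate"
    target_phrase: str  # raw words naming the entity


@dataclass
class Command:
    name: str
    args: dict


# Hypothetical alias table; the real registry is resolved by vector search.
ENTITY_ALIASES = {"hero": "main_character", "protagonist": "main_character"}
# Hypothetical verb-to-command table.
ACTION_TO_COMMAND = {"plot": "PlotData"}


def parse_intent(text: str) -> Intent:
    """Stage 1: split '<verb> <metric> for <entity>' into its parts."""
    verb, _, rest = text.lower().strip().partition(" ")
    metric, _, target = rest.partition(" for ")
    return Intent(action=verb, metric=metric.strip(), target_phrase=target.strip())


def resolve_entity(phrase: str) -> str:
    """Stage 2: map a free-text phrase to a canonical entity reference."""
    for alias, ref in ENTITY_ALIASES.items():
        if alias in phrase:
            return ref
    raise LookupError(f"no entity matches {phrase!r}")


def dispatch(intent: Intent, entity_ref: str) -> Command:
    """Stage 3: build a structured command from the resolved parts."""
    name = ACTION_TO_COMMAND.get(intent.action)
    if name is None:
        raise ValueError(f"unknown action {intent.action!r}")
    return Command(name=name, args={"metric": intent.metric, "target": entity_ref})


def handle(text: str) -> Command:
    intent = parse_intent(text)
    return dispatch(intent, resolve_entity(intent.target_phrase))


command = handle("Plot heart rate for the hero")
```

Here `command` is a `PlotData` command targeting `main_character`. Nothing in the chain touches simulation state; it only produces a structured request for the platform to validate and run.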

How It Works

COMMANDS

- RecordSimulation(duration, hz)

- CollectEntities(radius, filter)

- PlotData(metric, target)

SYSTEMS

- MetabolismSystem

- CardiovascularSystem

- EmotionalValenceSystem

ENTITIES

- main_character (ref)

- nearby_entities[] (spatial)

- interaction_targets[] (event)

PERMISSIONS

- GLYPH (engineer): full R&D access

- GRIM: templates only

- GLYPH (viewer): read-only state

Permission-Aware Dispatch — Role-Scoped Command Sets

Each role receives a scoped command set routed by BABEL's permission-aware dispatcher

- GRIM (readers): Narrative generation — simulation state → prose, reader input → world influences

- GLYPH / development: Code assistance — codebase-aware code generation, terminal integration, Claude Code bridge

- GLYPH / kernel engineering: System design — architecture suggestions, biological model explanations, performance analysis

- CALLIOPE: User support — library navigation, world discovery, troubleshooting
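One way to picture role-scoped dispatch is a lookup from role to allowed command names that is checked before any handler runs. The sketch below is an assumption-laden illustration, not BABEL's dispatcher: the `Role` enum, the specific scopes (the viewer limited to `PlotData`, the reader given no raw commands), and the handler return strings are all hypothetical.

```python
from enum import Enum


class Role(Enum):
    GLYPH_ENGINEER = "glyph_engineer"
    GLYPH_VIEWER = "glyph_viewer"
    GRIM_READER = "grim_reader"


ALL_COMMANDS = frozenset({"RecordSimulation", "CollectEntities", "PlotData"})

# Hypothetical scoping, loosely following the permission summary above.
COMMAND_SCOPES = {
    Role.GLYPH_ENGINEER: ALL_COMMANDS,            # full R&D access
    Role.GLYPH_VIEWER: frozenset({"PlotData"}),   # assumed read-only subset
    Role.GRIM_READER: frozenset(),                # templates only, no raw commands
}

# Placeholder handlers; real ones would call into the platform.
HANDLERS = {
    "RecordSimulation": lambda duration, hz: f"recording {duration}s at {hz} Hz",
    "CollectEntities": lambda radius, filter: f"collecting within {radius} ({filter})",
    "PlotData": lambda metric, target: f"plotting {metric} for {target}",
}


class PermissionDenied(Exception):
    """Raised when a role requests a command outside its scope."""


def dispatch(role: Role, command: str, **args):
    # Check the scope first so a denied request never reaches a handler.
    if command not in COMMAND_SCOPES.get(role, frozenset()):
        raise PermissionDenied(f"{role.name} may not run {command}")
    return HANDLERS[command](**args)


result = dispatch(Role.GLYPH_ENGINEER, "PlotData",
                  metric="heart_rate", target="main_character")
```

Calling `dispatch(Role.GLYPH_VIEWER, "RecordSimulation", duration=10, hz=60)` raises `PermissionDenied` in this sketch, because the check happens before the handler is looked up.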

LLMs at Boundaries Only — All Logic Deterministic

- Input translation — natural language → simulation commands

- Output narration — simulation state → readable prose

- Never in the loop — all world logic is deterministic FONT execution

- No hallucinated state — BABEL reads from simulation, doesn't invent

- Role-based permissions — command resolution respects user role (reader, creator, engineer, researcher) with different access levels
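The boundary rule above can be illustrated with a turn function that calls a model only before and after a deterministic step. This is a sketch under assumptions: `step` stands in for FONT execution (whose real API is not shown here), the two commands and the heart-rate arithmetic are invented for the example, and `translate` and `narrate` are plain functions where a language model would sit.

```python
from types import MappingProxyType
from typing import Callable, Mapping


def step(state: dict, command: str) -> dict:
    """Deterministic core: the same state and command always give the same result."""
    if command == "run":
        return {**state, "heart_rate": state["heart_rate"] + 30}
    if command == "rest":
        return {**state, "heart_rate": max(60, state["heart_rate"] - 10)}
    raise ValueError(f"unknown command {command!r}")


def run_turn(
    state: dict,
    user_text: str,
    translate: Callable[[str], str],
    narrate: Callable[[Mapping], str],
):
    command = translate(user_text)                # boundary 1: text -> command
    new_state = step(state, command)              # no model call in here
    prose = narrate(MappingProxyType(new_state))  # boundary 2: read-only view -> prose
    return new_state, prose


# Stand-ins for the model calls at each boundary.
def translate(text: str) -> str:
    return "run" if "run" in text.lower() else "rest"


def narrate(view: Mapping) -> str:
    return f"Her heart rate is now {view['heart_rate']} bpm."


start = {"heart_rate": 70}
state, prose = run_turn(start, "She starts to run", translate, narrate)
```

Passing the narrator a `MappingProxyType` view means it can read state but cannot write to it, which is one simple way to keep narration from inventing world state.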

42 Languages via Qwen, Llama, Mistral, Aya-23

Smaller, efficient models power player-facing narrative generation

- 42 languages — Living Worlds accessible globally

- Cultural adaptation — not just translation, contextual localization

- Consistent voice — character personalities preserved across languages

- Model selection: Qwen 2.5 7B, Llama 3.1 8B, Mistral 7B, Aya-23 8B — optimized for fast, natural dialogue

Local-First Multi-Model Orchestration (3B–32B)

In-house workbenches use larger models where the extra inference cost is an acceptable trade for stronger development capability

- Qwen 2.5 32B — deeper reasoning for code generation, architecture analysis, and system design

- Higher latency acceptable — development workflows tolerate longer response times for better quality

- Complex task handling — multi-step reasoning, codebase-aware suggestions, technical documentation

Inference Layer

Multi-model orchestration: local models sized by task complexity, privacy-first with no cloud dependencies

- Local inference: llama.cpp with Metal/CUDA acceleration, GGUF quantized models (Q4_K_M, Q5_K_M)

- Privacy-first: All inference runs locally — no cloud API dependencies, full data sovereignty

- Model routing: Intent complexity determines which local model handles the request

- Context management: Sliding window with relevance-weighted history
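A sliding window with relevance-weighted history could work roughly as follows: always keep the most recent turns, then admit a few older turns that score well against the current request. The scoring here (word overlap multiplied by a recency decay) and every parameter name are assumptions made for illustration; the source does not specify how relevance is computed.

```python
def select_context(
    history: list[str],
    query: str,
    window: int = 2,
    extra: int = 1,
    decay: float = 0.9,
) -> list[str]:
    """Keep the last `window` turns plus up to `extra` relevant older turns."""
    recent_start = max(0, len(history) - window)
    query_words = set(query.lower().split())

    scored = []
    for i in range(recent_start):
        overlap = len(query_words & set(history[i].lower().split()))
        age = recent_start - i  # 1 means just outside the window
        scored.append((overlap * decay ** age, i))

    # Older turns are admitted only if they share at least one word with the query.
    keep = {i for score, i in sorted(scored, reverse=True)[:extra] if score > 0}
    keep.update(range(recent_start, len(history)))
    return [history[i] for i in sorted(keep)]  # preserve chronological order


history = [
    "plot heart rate",
    "what is the weather",
    "collect nearby entities",
    "record for ten seconds",
]
context = select_context(history, "show heart rate again")
```

In this run the two most recent turns are kept by the window, and the first turn is pulled back in because it shares "heart" and "rate" with the query. A production system would more plausibly score relevance with embeddings than with word overlap.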

Multilingual Model Collection — Qwen, Llama, Mistral, Aya

- Qwen 2.5 7B — primary multilingual (Chinese, Japanese, Korean + Western)

- Llama 3.1 8B — Western languages (English, Spanish, French, Portuguese, German)

- Mistral 7B — European-focused (French, German, Italian, Spanish)

- Aya-23 8B — African + underrepresented (Swahili, Hausa, Yoruba, Amharic, Somali, Zulu)
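The language groupings above amount to a routing table from language code to model. The sketch below copies the groupings as listed; the model labels, the use of ISO 639-1 codes, the first-match precedence for languages that appear under two models, and the fallback to Qwen are assumptions. Actual language coverage should be checked against each model's own documentation before relying on a table like this.

```python
# Ordered list: the first model whose set contains the language wins.
MODEL_LANGUAGES = [
    ("qwen2.5-7b", {"zh", "ja", "ko"}),
    ("llama-3.1-8b", {"en", "es", "fr", "pt", "de"}),
    ("mistral-7b", {"fr", "de", "it", "es"}),
    ("aya-23-8b", {"sw", "ha", "yo", "am", "so", "zu"}),
]

# Assumed fallback, since Qwen is listed as the primary multilingual model.
DEFAULT_MODEL = "qwen2.5-7b"


def pick_model(lang_code: str) -> str:
    """Return the model label for a language tag such as 'ja' or 'pt-BR'."""
    code = lang_code.lower().split("-")[0]  # drop any region subtag
    for model, languages in MODEL_LANGUAGES:
        if code in languages:
            return model
    return DEFAULT_MODEL


choices = {code: pick_model(code) for code in ("ja", "pt-BR", "it", "sw", "fr")}
```

French, German, and Spanish appear under both Llama and Mistral in the list, so the order of `MODEL_LANGUAGES` decides which one serves them; here Llama wins because it comes first.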

Architecture

BABEL operates as a semantic service layer accessible to all MUSE workbenches:

- NLP pipeline: spaCy tokenization, dependency parsing, named entity recognition

- Vector index: FAISS store of command and entity embeddings (sentence-transformers) for similarity-based resolution

- Permission routing: Tool-scoped command sets with role-based access control

- Multi-model dispatch: Complexity-based routing between local models (3B–32B parameters)

- Response types: Analysis, Command, Discovery, Visualization, Text
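Complexity-based routing could be as simple as scoring a request and picking the smallest model tier whose ceiling covers the score. Everything specific in this sketch is hypothetical: the marker words, the length heuristic, the score ceilings, and the three tier labels, which only echo the 3B to 32B range mentioned above.

```python
import re

# (tier label, highest score it handles); None means no ceiling.
TIERS = [("3B", 1), ("7B", 3), ("32B", None)]

# Hypothetical words that hint at multi-step or analytical requests.
MARKERS = {"then", "compare", "explain", "design", "refactor"}


def complexity_score(text: str) -> int:
    """Crude heuristic: longer requests and marker words raise the score."""
    words = re.findall(r"[a-z']+", text.lower())
    return len(words) // 12 + sum(1 for word in words if word in MARKERS)


def route(text: str) -> str:
    """Return the smallest tier whose ceiling covers the request's score."""
    score = complexity_score(text)
    for label, ceiling in TIERS:
        if ceiling is None or score <= ceiling:
            return label
    raise RuntimeError("TIERS must end with an unbounded tier")


simple = route("plot heart rate")
medium = route("explain the metabolism system then plot it")
hard = route(
    "Compare the metabolism and cardiovascular systems, "
    "then explain the coupling and design a test"
)
```

A keyword heuristic like this is cheap and predictable, which matters if routing itself must stay deterministic. A real router might instead use parse depth or a small classifier.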

Semantic Search

Vector-based retrieval for commands, entities, and documentation

- Embedding model: sentence-transformers for intent and entity vectorization

- Index: FAISS flat index over command registry + entity catalog

- Retrieval: k-NN similarity search with confidence thresholds

- Learning: Successful resolutions cached as intent→command mappings

Critical Design Principle

Impact

- Semantic interface to complex simulation without sacrificing determinism

- Unified NLP pipeline across all MUSE workbenches with tool-scoped permissions

- Vector-based command resolution eliminates brittle keyword matching

- Global accessibility through multilingual model collection (42 languages)

- Clear separation: LLMs handle interface semantics, FONT handles world truth